Needle Points

Chapter III: A new plague descends

In February and March 2020, it became clear that the disaster that had swept through Wuhan was becoming catastrophic in Bergamo. As frontline health care workers were dying in both China and Italy, the virus had also spread throughout Western Europe and arrived in North America. Early reports of the case fatality rate reached over 14.5% in Italy in the spring, and in Spain, Sweden, and other hot spots it was over 11%, with the virus devouring the elderly in every affected country. PPE often didn’t exist for frontline health care workers. Bodies were stored in refrigerated trucks. Citizens were told masks would not protect them, and there were no known outpatient treatments. While hospitals could provide oxygen, this was often insufficient, and so victims were put on ventilators, which may have made some cases worse and made for a horrible way to die.

While much of the United States was terrified, there was some light: Dr. Anthony Fauci, the physician-scientist now running the country’s pandemic defense, seemed able to answer most press questions, projected an affable, avuncular persona, and spoke in ways people could understand, which is what the nation required. Even skeptics had hopes: Fauci seemed steady when events took unexpected turns, explaining that we were learning as we went along. He said the lockdown would be for 15 days, to “flatten the curve.” When that didn’t work, he explained why, argued that it should be extended, and much of the nation went along. In a United States exhausted by its hyperpolarized political scene, here was someone who had worked with both parties, advising every president since Ronald Reagan. For those on the right, he could be seen as an employee of and messenger for President Donald Trump; for those on the left, he was a longtime public servant who had headed the same institution, the National Institute of Allergy and Infectious Diseases (NIAID), since 1984, and played vital roles in the fights against AIDS and Ebola. There was a widespread sense that Fauci was the right man at the right time.

But then there were flip-flops on masks: After claiming the science showed that masks were unnecessary, Fauci later said they were absolutely necessary, but wouldn’t be for the vaccinated, until, eventually, they were. There were also disputes about lockdowns: Initially introduced as temporary to flatten the curve, they were later extended to become a new way of life, in order to save lives. But then some states like Florida, which didn’t impose long and severe lockdowns, had lower age-adjusted mortality than states like New York, which did. Then, another issue emerged that was not simply scientific, but also political.

Since the earliest days of the pandemic, many regular people struggled to make sense of its origins. The Chinese Communist Party had claimed the virus emerged from a wet market while denying any connection to virology labs located nearby. There was obviously a cover-up unfolding in China, with arrests of citizen journalists and detentions and disappearances of Wuhan physicians who witnessed the first cases, and who would have ideas about where it started.

Various observers argued that there was reason to consider that COVID may have leaked from the Wuhan Institute of Virology, and perhaps even may have been engineered by gain-of-function (GoF) research, in which a natural virus is made more contagious and lethal, ostensibly to see if the scientists can “get ahead” of nature, and to study how it operates in order to make new vaccines or medications, or for biological warfare. GoF is so controversial that in 2014 President Barack Obama put a moratorium on it. In 2017, Drs. Fauci and Francis Collins, then director of the NIH, who had opposed the moratorium, succeeded in having it lifted.

But Fauci asserted that the scientists who were in a position to judge the COVID situation concluded that its origin was natural. The media followed suit, and called those who thought otherwise “conspiracy theorists.” The New York Times, Washington Post, and others called the possibility of a lab leak a “conspiracy theory” that had been “debunked.”

In the meantime, a master narrative began to emerge: Once upon a time, life was relatively normal and safe, and then the pandemic came, and life as we knew it suddenly changed in awful ways. The only way out, the only path back to a world without COVID, would be to make a vaccine—as quickly as possible. Until then, everyone would have to do their part to “stop the spread,” which meant that basic social functions would have to cease, including school for millions of children. Thousands of small businesses would have to close, and civil liberties rolled back. It would be a difficult time, but eventually we would have the vaccine, and COVID would be over—as long as everyone got it, of course. But then, who wouldn’t want to?

On this point, Bill Gates, of Gavi, the Vaccine Alliance, and the largest private contributor to the WHO, was very direct: “The ultimate solution, the only thing that really lets us go back completely to normal and feel good … is to create a vaccine,” he said.

If you asked researchers or most physicians in the spring of 2020 how long it normally takes to produce a vaccine safe enough to administer to patients, many would have pointed out that the average fast vaccine takes 7-10 years, and that the first vaccine might just be one of several required to end a given crisis—because often the first is not the best.

This seemed too long. Gates predicted that there would be problems moving quickly because companies would have to produce a one-size-fits-all vaccine that could have different effects on different groups, including pregnant women, the undernourished, and people with existing comorbidities. “People like myself and Fauci,” Gates said, “are saying 18 months [to make the vaccine] … If everything went perfectly … there will be a trade-off: We’ll have less safety testing than we typically would have ... we just don’t have the time to do what we normally do.” The solution, he noted, was that “governments will have to be involved because there will be some risk and indemnification needed.”

In August, that solution was reached. As The Intercept reported on August 28, “an amendment to the PREP Act … stipulates that companies ‘cannot be sued for money damages in court’ over injuries caused by medical countermeasures for Covid-19. Such countermeasures include vaccines, therapeutics, and respiratory devices.” The only exception to this immunity would be if death or serious physical injury is caused by “willful misconduct.” Indemnification for vaccines was, as discussed above, not unique; what was new was that the companies producing them were indemnified before the vaccine was even made and fully assessed—knowing it would all be done faster than ever before.

As the nation agonized over mounting deaths, the race for a vaccine was moving quickly—if too opaquely for some. In September 2020, a number of scientists began openly worrying about the nontransparency of the vaccine trials, and whether this could wind up affecting vaccine hesitancy.

The New York Times ran several articles on this, reporting that AstraZeneca, Pfizer, and Moderna had each withheld their study protocols from outside scientists and the public. Withholding protocols guarantees that outside researchers can’t know how participants are selected or monitored, and how effectiveness or safety are defined, so they can’t really know what exactly is being studied. Pharma companies have traditionally argued that not only the trial patents but the clinical trial data belong to them—that it’s proprietary, even though the studies’ results impact millions. This is part of a kind of “traditional secrecy” in the field. Delaying protocol release conveniently means that it is a company’s press releases, not the verified science, that dominate the public’s all-important initial impression of its product.

That the government’s regulatory agencies go along with all this—it is, in fact, standard practice—doesn’t assuage the public; for many, it makes the whole process appear corrupt. And it doesn’t help that, according to the conflict-of-interest disclosures of the authors of the Pfizer and Moderna vaccine clinical trials, some of the authors are employed by these companies and often have stock options.

The essence of the scientific method is conducting experiments that everyone can objectively see and verify; transparency is the bedrock of experimental science, and the means to ultimately dispel doubt. Moreover, in terms of the scale of public involvement, the experience of the summer and fall of 2020 was unlike any other in the history of medicine. Never before had studies of this size and consequence been run so quickly, or a medicine been produced so quickly to be given to hundreds of millions of people.

These studies were called phase III clinical trials, and if they had positive results, then the vaccine could be given to hundreds of millions of people on the basis of an FDA Emergency Use Authorization. But how long were the patients followed in these two studies after their second dose, to assess safety and efficacy? Two months. On that basis the vaccines were given to over a hundred million people.

One must not confuse the perhaps immaterial fact that the vaccines were made quickly with the arguably more important fact that they were tested on people for only a short time. These vaccines were developed so quickly in part because the new mRNA technology allows quicker production, and because parts of the production lines that in the past were staged out over time were, in this case, set up simultaneously with the help of huge cash infusions. All else being equal, there’s a serious argument that it might be hugely advantageous to be able to produce new vaccines so quickly. “If you can intervene with let’s say a 40% effective vaccine 4 months before you can intervene with an 80% effective vaccine, you save more lives with the 40% effective vaccine that’s delivered 4 months earlier,” Dr. Barney Graham of the National Institutes of Health pointed out. “Being fast in an outbreak setting in some ways is more important than being perfect.”
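Graham’s trade-off can be put into rough numbers. What follows is only a toy sketch, not his model: it assumes a constant number of deaths per month without a vaccine, instant and complete coverage once a vaccine arrives, and a fixed time horizon. The function names and every figure other than the 40%, 80%, and 4 months from the quote are hypothetical.

```python
def deaths_averted(efficacy, delay_months, horizon_months, deaths_per_month):
    """Deaths averted in a toy constant-rate model (hypothetical inputs).

    The vaccine protects only for the months remaining after it arrives.
    """
    protected_months = max(0.0, horizon_months - delay_months)
    return efficacy * deaths_per_month * protected_months


def break_even_horizon(eff_early, eff_late, delay_months):
    """Horizon (in months) at which the later, better vaccine catches up.

    Solves eff_early * T == eff_late * (T - delay_months) for T.
    """
    return eff_late * delay_months / (eff_late - eff_early)


if __name__ == "__main__":
    # 1000 deaths per month is an arbitrary, illustrative baseline.
    for horizon in (6, 8, 12):
        early = deaths_averted(0.40, 0, horizon, 1000)
        late = deaths_averted(0.80, 4, horizon, 1000)
        print(f"horizon {horizon:>2} months: early 40% = {early:.0f}, late 80% = {late:.0f}")
    print("break-even horizon:", break_even_horizon(0.40, 0.80, 4), "months")
```

Under these simplified assumptions the earlier vaccine stays ahead only while the horizon is shorter than eight months. An outbreak whose deaths are concentrated in its first months would tilt the comparison further toward speed, which is the setting Graham describes.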

Still, it was obvious as early as the fall that some testing steps would be skipped. “We’ll have less safety testing than we typically would have,” Gates noted. “We just don’t have the time.”

Must that be a problem? Why, especially during a pandemic, wouldn’t we want to quickly distribute any vaccine that appears to work even somewhat effectively to those who are willing to take on any potential risks that may go with less safety testing? Some people might even decide for themselves that a raging pandemic is a dangerous enough threat to outweigh every other possible concern.

But what we shouldn’t do, if we want to maintain public trust and cohesion, is act as though there is no chance that any legitimate concern could ever possibly emerge, or that we know more than we really do after only two months of study. With complex biological systems, we simply can’t presume that just because we have a fantastic idea for a treatment, the safety we hope for and see at the start will necessarily hold over time.

Take the classic case of thalidomide. It was originally a sedative, used for anxiety, and later tried for nausea. It worked, leading some to “theorize” that it could prevent nausea in pregnant mothers. In practice, once on the market, it did. But it also caused serious birth defects in children. It took longer than nine months, and enough cases, to realize that these side effects came from the drug, and even more time to overcome the drug company’s opposition to the facts.

The same applies to any of the major drugs pulled off the market for causing cancer, heart attacks, and diabetes. They don’t always cause dire consequences immediately, or in everyone. Sometimes these drugs set a process in motion immediately, but it takes scientists a year or many years to pick up the trend in a population at large. Working from first scientific principles and based on what we already know, we can often develop a neat theory about what might work. But because we don’t know what we don’t know, it often doesn’t turn out as we expect. That is why empirical science developed as a way to test our theories. Empirical science is always, by definition, science after the fact.

This is especially important given the specific kind of vaccine that was being approved in the United States—the mRNA vaccine—which was a first-of-its-kind. Instead of exposing a person to the virus itself in attenuated live form (like the MMR) or killed form (like the polio shot or flu shot)—which is how many of the other vaccines we’ve come to know work—in the mRNA vaccine a person is exposed to an artificially made genetic messenger, mRNA, that goes into their cells and directs them to make part of the virus, which then triggers antibodies. Early on in the rollout, both the pharma PR industry and the press emphasized how novel these vaccines were, and how this unique technique would produce a vaccine so quickly. But when some side effects started to emerge, and people got nervous, officials and the companies’ own PR teams changed their message: These techniques were now presented as not new at all, but as having been around a long time. The hesitant notice flip-flops in communication like this. At best, it makes them wonder about the lasting veracity of public health messages; at worst, it makes them deeply suspicious.

Over the course of the summer of 2020, while the clinical trials were ongoing, outside scientists still had no access to what exactly was being measured and hence studied, so there was no external check on or observation of the process, despite much of the research having been funded by government: Marketing and distribution would be done by the government, the government would be providing the customers, and the government would even pay for the consequences of safety problems that might arise. Withholding protocols rather than disseminating them as widely as possible was, under such circumstances, a sign of outlandish chutzpah. And the governmental agencies that are supposed to advocate for the public—in this case, the FDA, CDC, and NIH—countenanced it.

In September 2020, one bit of secrecy was lifted: It turned out that AstraZeneca had stopped its clinical trial twice. The first pause was not even announced; the second one was, but neither the U.K. public nor the FDA nor scientists were immediately told why. Before they ever found out, however, AstraZeneca CEO Pascal Soriot did privately disclose the reason—two cases of serious neurological damage—to the JP Morgan investment bank. To some, this said much about who, exactly, this process was designed to benefit.

“The communication … has been horrible and unacceptable,” vaccine advocate and virologist Dr. Peter Hotez said. “This is not how the American people should be hearing about this.” Scientists started to demand to see the protocols. Hotez and others “criticized obtuse statements released by government officials, including U.K. regulators who he said failed to supply a rationale for resuming their trials.” Government officials and the regulators, who most citizens assume are there to keep these processes honest, seemed instead to be partners in the obfuscation.

In November 2020, the exciting news arrived: We had vaccine liftoff. Phase III trials were said to show that the Pfizer and Moderna vaccines were 95% and 94.5% efficacious, respectively, as Fauci and the company press releases announced, and the Emergency Use Authorization was granted on the basis of these two-month studies, allowing distribution of the vaccines to millions.

“Efficacious” is the term used to describe how effective a treatment is in the artificial situation of a clinical trial with volunteer patients, a group not always representative of the wider population; “effective” is the term used to describe how a treatment works in the real world. The media quickly assumed the two were the same. To them, hearing that a vaccine was “95% efficacious” meant it was practically perfect, which the press repeated over and over.
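For readers who want to see what sits behind a figure like “95% efficacious,” here is a minimal sketch of the standard calculation: one minus the ratio of the attack rate in the vaccinated group to the attack rate in the placebo group. The case counts below are invented for illustration and are not taken from any trial. The function name is likewise hypothetical.

```python
def vaccine_efficacy(cases_vaccine, n_vaccine, cases_placebo, n_placebo):
    """Efficacy as relative risk reduction for whatever endpoint was counted."""
    attack_rate_vaccine = cases_vaccine / n_vaccine
    attack_rate_placebo = cases_placebo / n_placebo
    return 1 - attack_rate_vaccine / attack_rate_placebo


if __name__ == "__main__":
    # Hypothetical trial: 20,000 people per arm, 10 vs. 200 counted cases.
    efficacy = vaccine_efficacy(10, 20_000, 200, 20_000)
    print(f"efficacy: {efficacy:.1%}")
```

The number describes only the endpoint that was counted, among the volunteers who were enrolled, over the period they were followed. Change any of those three and the same formula can return a different figure.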

But what exactly were the vaccines “efficacious” at doing? Stopping viral transmission? Preventing severe illness, or reducing hospitalization, or ICU admissions? Preventing death? Efficacious for how long? And efficacious in whom? In the elderly, who were most vulnerable to death? Without clear definitions and answers to these questions—typical of much of the coverage—Americans only had a limited idea, really, of what these vaccines had been shown to do in the narrow universe of clinical trials, let alone what they’d do when given to the public. In fact, they didn’t receive answers to a single one of these questions.

Moreover, there was still a cloud of mystery surrounding the trials. After pressure mounted in the wake of the AstraZeneca revelation in September, the four major Western vaccine manufacturers finally released their protocols, each over 100 pages long. After the protocols were released, Peter Doshi, an associate editor at the British Medical Journal who does research into drug approval processes and how results are communicated to the public, tried to sound an alarm: “None of the trials currently underway are designed to detect a reduction in any serious outcome such as hospital admissions, use of intensive care, or deaths,” he said.

Only one of the studies, that of the Oxford AstraZeneca vaccine, looked at whether vaccinated individuals were less likely to transmit virus by doing weekly polymerase chain reaction (PCR) swabbing. Vaccinated people had lower viral loads, were less likely to have a positive COVID test, and were positive for shorter durations—very good news indeed, though not automatically applicable to the mRNA vaccine studies. So what were these clinical trial studies that showed 95% and 94.5% efficacy looking at, if not saving lives and reducing viral transmission?

Consider that researchers can set up a study to examine whether a vaccine prevents a person from experiencing any or all of the following events, sometimes called “endpoints”:

An asymptomatic infection (the patient is carrying the virus, but the case is so mild that they don’t know it, even though it is shown to be present by a positive virus test).

A clinically symptomatic infection that is mild (and might be confused with a common cold).

A clinically symptomatic infection that is moderate.

A clinically symptomatic severe infection that requires hospital admission.

A clinically symptomatic severe infection requiring ICU admission, and even a ventilator.

A clinically symptomatic severe infection that ends in death.

What were the events, or “the endpoints,” that the phase III Moderna and Pfizer studies claimed to be examining? They said they looked at any clinically symptomatic infection “of essentially any severity” as the primary endpoint. But therein lies the rub.

As Doshi explained, “Severe illness requiring hospital admission, which happens in only a small fraction of symptomatic covid-19 cases, would be unlikely to occur in significant numbers in trials. … Because most people with symptomatic covid-19 experience only mild symptoms, even trials involving 30,000 or more patients would turn up relatively few cases of severe disease.”

How few serious cases, in terms of deaths, were there? In the Pfizer trial, not a single person died of COVID-19 in either the vaccine or the placebo group. The report that Moderna gave to the FDA on Dec. 17, 2020, on its trial specifically said it considered death “a secondary endpoint,” and added that “there were no deaths due to COVID-19 at the time of the interim analysis to enable an assessment of vaccine efficacy against death due to COVID-19.” By publication date, one person had died in the placebo group.

Go over that again: In the study period for the two new mRNA vaccines, only one person out of 70,000 died a COVID death. Now ask yourself, without knowing the demographic markers of the trial participants but knowing for a fact that hundreds of thousands of people were dying from the virus: Does this seem to you like an appropriate way to study severe illness? Moderna told the BMJ in August 2020: “You would need a trial that is either 5 or 10 times larger or you’d need a trial that is 5-10 times longer to collect those events.”
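The arithmetic behind Doshi’s point, and behind Moderna’s “5 or 10 times larger,” can be sketched in a few lines. Every rate below is a made-up placeholder chosen only to show the shape of the problem, and the use of a Poisson approximation for the chance of seeing at least one event is an assumption of this sketch, not something the trials or the BMJ specified.

```python
import math


def expected_events(participants, symptomatic_rate, fraction_with_outcome):
    """Expected number of a rare outcome among participants (hypothetical rates)."""
    return participants * symptomatic_rate * fraction_with_outcome


def prob_at_least_one(expected):
    """Chance of observing one or more events, assuming a Poisson count."""
    return 1 - math.exp(-expected)


if __name__ == "__main__":
    placebo_arm = 15_000        # hypothetical: half of a 30,000-person trial
    symptomatic_rate = 0.01     # hypothetical share who get symptomatic COVID
    death_fraction = 0.005      # hypothetical share of those cases ending in death
    for scale in (1, 5, 10):
        n = placebo_arm * scale
        deaths = expected_events(n, symptomatic_rate, death_fraction)
        print(f"{n:>7} participants: expected deaths = {deaths:.2f}, "
              f"P(at least one) = {prob_at_least_one(deaths):.2f}")
```

With placeholder rates like these, a placebo arm of 15,000 would be expected to record less than one death, far too few to compare against the vaccine arm. Scaling the trial up by a factor of five or ten is what moves that expectation into countable territory.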

In a talk based on her Lancet article, given to the BMJ’s “COVID19 Known Unknowns: Vaccines” webinar in February 2021, Dr. Susanne Hodgson, National Institute for Health Research academic lecturer in infectious diseases at the University of Oxford, stated: “The current RCTs that are ongoing are … not powered to assess efficacy against hospital admission and death.”

In the same webinar, Doshi presented on the transparency issue. Having read the protocols and then the phase III trial studies of the Pfizer, Moderna, AstraZeneca, and Sputnik (Russian) vaccines, he wanted to check the raw data from the studies in order to verify it—that is, he wanted to see not just final charts, tables, averages, percentages, and conclusions, but to look over the individual cases. Most of the studies had a line in them that claimed such data was available upon request. According to Doshi, he wrote to the drug companies that had authored the studies and asked to see it. But he wasn’t permitted.

“Each time a trial is published there is this data sharing statement and everything sounds good, until you read the fine print,” he said. “Pfizer, for example, says that it is sharing data upon request. Except it is actually not planning to do so for a very long time. I asked. The same for Moderna. The same for the Oxford AstraZeneca and the Russian vaccine. They all said they will be sharing the data, just not yet. And most are tying the data release to the end of the trials. So we have a situation where the vaccines are being administered to the masses but data isn’t being shared because the sponsors say the trials are ongoing.”

Pfizer data, he learned, might arrive in January 2025. Moderna said it may be available once the trial is complete (sometime in 2022). Other companies were similarly vague. To date, approximately 4 billion people have already got these vaccines—many receiving a first-of-its-kind mRNA genetic formulation, without outside sources reviewing the raw study data. Given that the companies won’t release this data in a timely manner, it is reasonable to assume that public health officials in different countries that approved the vaccines have not seen the raw data either, or run verification checks.

Given all this, it is difficult to assuage those who distrust the systems that delivered the vaccines: At least one of these vaccines, the Moderna, was supported by the NIH and NIAID, which may have joint ownership in intellectual property that undergirds the vaccine. That means their budgets stand to benefit from sales, and individual government employees may get royalties for them. Though it would fall to the FDA to officially approve the vaccines, the advice to enact vaccine mandates would come from a small network, and would be based on studies that were authored in some instances by people who are employees of the companies themselves, which were testing their own products. And when a remarkably trusting public and a few scientists requested a look at the raw data, they got stiffed.

One can only imagine how enriched our knowledge would be if it were otherwise—if, to take just one example, the raw data were available and verified by the hive mind of world scientists, who, drilling down, could see for whom the vaccine was most effective, and who was most at risk of serious side effects, in order to follow them longer than two months and to protect those groups of people in the future. The confidence this would have inspired in a vaccine produced so quickly might have been astonishing—a miracle not only of human scientific advancement, but of the human capacity for persuasive communication and the social progress it can generate.

Alas, that’s not what we got. The train was already barreling out of the station. When the first vaccines were rolled out in December 2020, Fauci received his Moderna shot, announcing that he wanted to get publicly vaccinated as a “symbol” for everyone in the country. “I feel extreme confidence in the safety and the efficacy of this vaccine,” he said. As to the question of how sick the patients in the study were, he said: “With regard to Pfizer it was 95% efficacious not only against disease that is just clinically recognizable disease, but severe disease.” And he said much the same was found for the Moderna vaccine: It prevents severe disease.

By the spring of 2021, the master narrative—the necessity of using one main tool, the vaccine, “to vanquish the enemy”—was working brilliantly. Government data from Israel and the U.K. showed the vaccines weren’t just “efficacious” in clinical trials, but also “effective” in the real world. An April 28 article in the Harvard Gazette was titled “Vaccines can get us to herd immunity, despite the variants.” Dr. Ugur Sahin, the chief executive of BioNTech, which developed the mRNA vaccine for Pfizer, was quoted as saying that Europe would reach herd immunity in July or August. The virus would no longer be able to spread.

In the U.K., Freedom Day was set for June 21 (later changed to July 19), and the return to normal in other vaccinated countries seemed not far behind. On April 22, Israel, considered the world’s most vaccinated country (except for some even tinier nations), for the first time recorded no daily COVID deaths. Pfizer’s CEO—who called Israel “the world’s lab,” not only because it was highly vaccinated but because it was vaccinated early, giving the world a glimpse of its future—announced in February that the experiment was going marvelously, saying, “current data shows that after six months the protection is robust” and “there are a lot of indicators right now that are telling us that there is a protection against the transmission of the disease.” The U.K., the second most vaccinated large nation, had a terrible death count in January. But on May 10, there was not a single COVID-19 death in all of England, Northern Ireland, and Scotland.

President Biden assured the American people confidently: “If you’re vaccinated, you’re protected. If you’re unvaccinated, you’re not”—reiterating that being vaccinated “is a patriotic thing to do.” This was a riff on CDC Director Dr. Rochelle Walensky’s statement: “If you have two doses of the vaccine, of the mRNA vaccines, you’re protected. You don’t need to wait for a booster, you’re protected.”

Over the spring, Walensky became an increasingly prominent face. In the months since Biden was inaugurated, a slew of officials who had advised the Trump administration were out of the picture—Dr. Robert Redfield (as head of the CDC), Dr. Deborah Birx, and Dr. Scott Atlas—and a new cohort was ushered in. More and more, Walensky became a visible voice of public health.

In April, during a White House press briefing barely four months after distribution of the first vaccine doses began, Walensky announced that the “CDC recommends that pregnant people receive the COVID-19 vaccine.” But if you checked the CDC website that day—as many pregnant women and their physicians of course did—you would have found something different: “If you are pregnant, you may choose to receive a COVID-19 vaccine,” but “there are currently limited data on the safety of COVID-19 vaccines in pregnant people.” In the press briefing, Walensky had cited a study from the New England Journal of Medicine, about which she said: “no safety concerns were observed for people vaccinated in the third trimester or safety concerns for their babies.”

The study did claim that there was no increased instance of fetal death or neonatal death, which was very reassuring. But it was unable to answer one of the main questions many pregnant women are concerned about: Will these new vaccines have adverse effects on my baby’s development after birth? The study’s authors made clear that they didn’t have enough longitudinal data on women in the first or second trimester of pregnancy to draw conclusions about women vaccinated in those two trimesters (when different organ systems develop), and that their study was therefore “preliminary”: “Preliminary findings did not show obvious safety signals among pregnant persons who received mRNA COVID-19 vaccines. However, more longitudinal follow-up, including follow-up of large numbers of women vaccinated earlier in pregnancy, is necessary to inform maternal, pregnancy, and infant outcomes” (emphasis added).

Recall that the vaccine rollout began in December 2020, for older people. This study only looked at safety data on women in various stages of pregnancy from Dec. 14, 2020, to Feb. 28, 2021, a two-and-a-half-month period. Many women become more vigilant in pregnancy about what they eat, and what they put into their bodies. So it should come as no surprise that more than one woman who was either pregnant or trying to conceive began wondering about a question that one of my colleagues asked me: If at the time of the study, the vaccine had only been available for two-and-a-half months, wouldn’t that mean—if it’s still true that human gestation is approximately nine months—that literally no one who had been vaccinated early in pregnancy had yet followed through to a full-term pregnancy?
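My colleague’s question is a matter of calendar arithmetic, and it can be checked directly. The sketch below uses the two dates given for the study window; the trimester boundary (13 weeks) and the threshold for a term pregnancy (37 weeks, a deliberately conservative choice) are conventional figures assumed here, and the function names are hypothetical.

```python
from datetime import date

STUDY_START = date(2020, 12, 14)  # first day covered by the study
STUDY_END = date(2021, 2, 28)     # last day covered by the study
FIRST_TRIMESTER_END_WEEKS = 13    # assumption: conventional boundary
TERM_WEEKS = 37                   # assumption: conservative threshold for term


def study_window_days():
    """Length of the study window in days."""
    return (STUDY_END - STUDY_START).days


def weeks_at_study_end(weeks_at_vaccination):
    """Gestational age at the end of the window for someone vaccinated on day one."""
    return weeks_at_vaccination + study_window_days() / 7


if __name__ == "__main__":
    print("study window:", study_window_days(), "days")
    latest = weeks_at_study_end(FIRST_TRIMESTER_END_WEEKS)
    print(f"latest first-trimester case at study end: {latest:.1f} weeks")
    print("reached term within the window:", latest >= TERM_WEEKS)
```

The window is 76 days long. Even a woman vaccinated on the very first day, at the very end of her first trimester, would have been at roughly 24 weeks when the window closed, well short of term under these assumptions.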

None of this is to insinuate an opinion about the use of the vaccines in pregnancy; we are here discussing how simplifications of what scientific studies actually show at a particular moment—even when they turn out, ultimately, to be right—can generate distrust. I would venture that what young families wanted to hear was something both reassuring and reflective of whatever trustworthy data was available to date—like “we are working on a longer study, and feel hopeful about it, but for now we at least know if vaccinated in the third trimester, there is little chance the vaccine will lead to a death.” That, I believe, would have quelled anxiety. But the government and its messaging partners chose a different posture—one that suggested certainty when important data was still yet to come. A lesson in human nature: When public health officials distrust the public, the public will come to distrust them.

Take, for example, an article by Kimberly Atkins Stohr, senior opinion writer and columnist for the Boston Globe, who got the Johnson & Johnson vaccine in April, a week before the FDA put a pause on it because of blood-clot complications. As Atkins Stohr indicates, the FDA admitting that there might be a problem, as opposed to hiding it, made her more—not less—likely to believe that the institution is on top of monitoring the vaccines. “I want others to view this pause not as reason to doubt the drug, but a reason to believe in it,” she writes.

The mainstream media in the United States also often downplayed potential problems, and even demonized those who took them seriously—portraying white Christian Republicans as the last redoubt of COVID vaccine skepticism in America. But if white Americans in red states have had high rates of hesitancy, African Americans and Latinos have too. As we’ve seen in the case of African Americans, hesitancy is based at least in part on well-earned distrust. In the U.K., in March 2021, vaccination rates were very high in the “white British” group (91.3%), and British Christians had the least hesitancy, whereas vaccination rates were lower in the Black African and Black Caribbean communities (58.8% and 68.7% respectively), and among Muslims, Buddhists, Sikhs, and Hindus. In Canada, the typical vaccine-hesitant person is a 40-year-old woman who tends to vote Liberal.

A January Gallup poll showed that 34% of U.S. frontline health care workers said they did not plan to get vaccinated, and an additional 18% were “not sure” what they would do. Given the WHO’s own definition of the “vaccine hesitant”—people who delay or are reluctant to take a vaccine—one could say that 52% of frontline U.S. health care workers were vaccine hesitant at the beginning of the year. It was hard to argue that these were people who got all their information from a few rancid conspiracy websites. In fact, many of these professionals are vaccinated for other illnesses. Nor can we argue that frontline workers are overly anxious and cowardly; many are exposed to active COVID regularly.

At other times, we are told that the hesitant are only those with the least education. But a Carnegie Mellon and University of Pittsburgh study showed that “by May [2021] PhDs were the most hesitant group.” In May, Sen. Richard Burr of the U.S. Senate Committee on Health asked Fauci how many employees of the NIH, the nation’s premier health sciences research institution, had been vaccinated. “I’m not 100% sure, Senator, but I think it’s probably a little bit more than half, probably around 60%,” he said. The senator asked the same question of Dr. Peter Marks, director of the Center for Biologics Evaluation and Research at the Food and Drug Administration, about the FDA’s employee vaccination. “It’s probably in the same range,” he answered.

In studies in the West, the hesitant repeatedly express, as the top reason for their reluctance to get vaccinated, concerns about what we might call “future unknown effects.” In a May study of Britain, for instance, 42.7% cited this as their biggest fear. The hesitant were not particularly concerned about trivial short-term side effects like sore arms, fatigue, or a passing fever or headache. Only 7.6% were distrustful of “vaccination” generally. In the United States, a multi-university study of over 20,000 people found safety concerns, or uncertainty of the risk, as the top reason given for vaccine hesitancy—59%. Only 33% agreed that vaccines are thoroughly tested in advance of release. The authors reported “massive differences between the vaccinated and unvaccinated in terms of their trust of different people and organizations,” including the CDC and FDA. An IPSOS-World Economic Forum survey of 15 countries showed that in all 15 countries, the leading reason the reluctant gave was fear of side effects, exceeding all other concerns by far. In all countries surveyed, the number of people who said they were “against vaccines” (i.e., the anti-vax position) was generally a minor fraction of those who hadn’t yet been vaccinated.

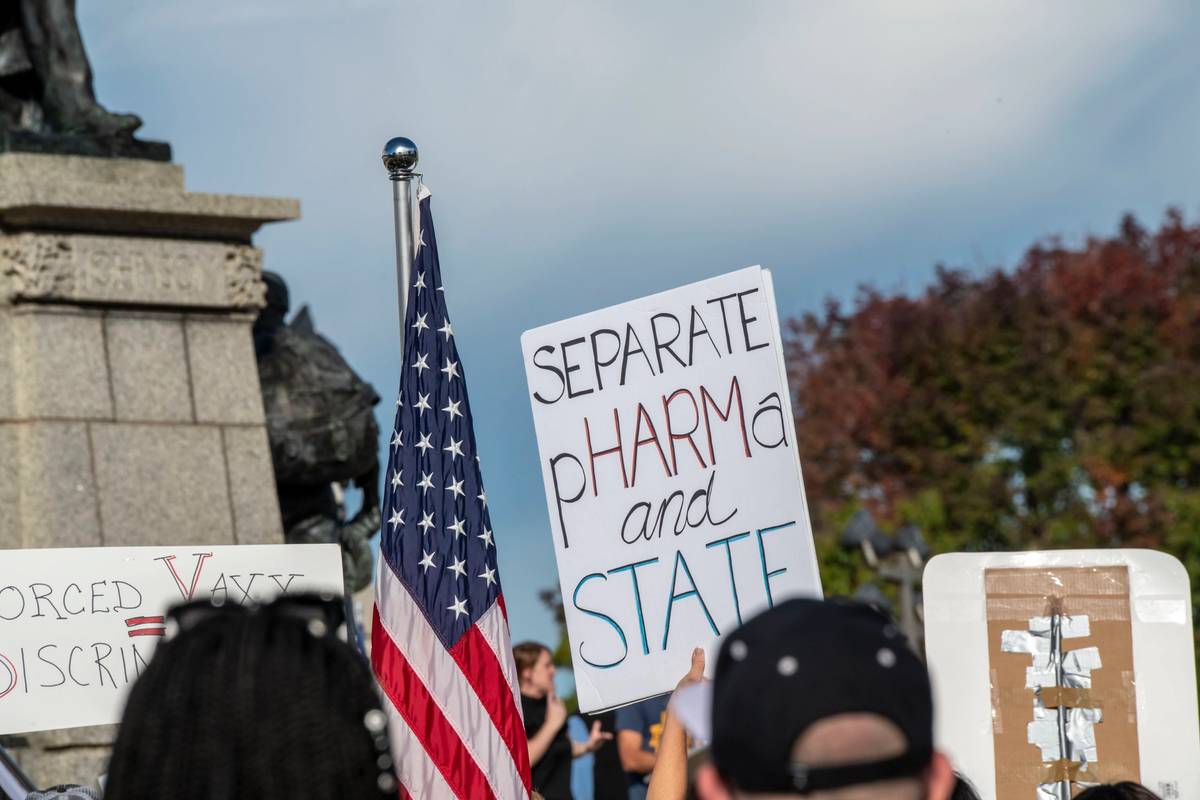

A common theme in France, Britain, and the United States, in fact, is distrust of the vaccine troika—Big Pharma, government and public health, and the health care industry—and an insistence that individuals should have the right to decide whether to get vaccinated. These similarities are worth paying attention to, because they suggest that the attempt to explain the phenomenon by using the group identifiers American media is so fond of—sex, race, religion, and political affiliation—falls short, and shifts attention away from the real issues creating distrust.

On May 11, Fauci appeared in front of a Senate hearing. “The NIH and NIAID categorically has not funded gain-of-function research to be conducted in the Wuhan Institute of Virology,” he said. Yet, in a circumvention of the Obama administration’s 2014 moratorium, and to the disapproval of many in the U.S. scientific community, Fauci’s agency did fund a U.S. company called EcoHealth Alliance, which then facilitated GoF research in collaboration with the Wuhan Institute of Virology. Indeed, from June 2014 to May 2019, Fauci’s agency funded EcoHealth and Peter Daszak—a well-known GoF researcher who subcontracted the grant to the Wuhan lab, where GoF research on bat viruses was conducted and led by Dr. Shi Zhengli—and which wasn’t subject to the U.S. government moratorium.

Dr. Francis Collins, then head of the NIH, had told the House Appropriations Subcommittee that the NIH did not fund GoF in Wuhan. But later, after Fauci reversed his prior claim and said it was possible, Collins also backtracked. “We of course do not have internal insight as to what was going on in the Wuhan Institute of Virology,” he said. Both reversals came only after the plausibility of the lab leak theory started to gain mainstream acceptance, and public pressure mounted.

While Fauci’s denial in the Senate might have been technically accurate, it was misleading: Neither agency funded this kind of research directly, but both did so through a third party. As it turned out, Fauci himself wrote in 2012 that he, like GoF critics, could imagine “an important gain-of-function experiment involving a virus with serious pandemic potential,” whereby “an unlikely but conceivable turn of events” leads to an infection of someone in the lab “and ultimately triggers a pandemic.” Nonetheless, he wrote, for “the resulting knowledge” such research might yield, it was worth the risk.

In June, the question of what Fauci knew and when he knew it came up in his emails, which showed that, although he denied to Congress that his organization funded experiments at the Wuhan Institute of Virology, it had. On Feb. 1, 2020, Fauci sent two emails to his staff about a “gain of function” study the NIH had approved, in which he referred to “SARS Gain of Function.” His denial of NIH involvement ultimately proved unconvincing, since funding of it was already part of the NIH committee and grant paper trail. Shi Zhengli, the head of the Wuhan Institute of Virology, co-authored a paper about constructing a superlethal virus, which appeared in Nature Medicine in 2015, and specifically thanked the NIH and EcoHealth for funding her work. A 2017 research article, also with Shi Zhengli as co-author, not only qualifies as GoF research, but “epitomizes” it—and specifically states that it was funded by an NIH-NIAID grant.

All this was important because it was part of the larger story that the public was following. Learning that the agencies and officials charged with leading Americans out of the pandemic in fact had links to a Chinese lab with a history of safety violations, and which also appeared to be involved in dangerous experiments that might be linked to the outbreak in Wuhan, was for many profoundly unsettling.

Meanwhile, the enmeshment between the FDA and pharma was becoming more relevant. In June it was announced that Stephen Hahn, who had led the FDA from Dec. 17, 2019, until Jan. 20, 2021, during which time the agency approved the Moderna and Pfizer vaccines, had become the chief medical officer of Flagship Pioneering, the venture capital firm that launched Moderna in 2010 and now owns $4 billion of Moderna stock. On June 27, Scott Gottlieb, who headed the FDA before Hahn, joined the board of Pfizer.

On June 3, three scientists from an FDA advisory committee—Dr. Aaron Kesselheim, professor of medicine at Harvard Medical School, Joel Perlmutter, M.D., a neurologist at Washington University in St. Louis, and David Knopman, M.D., a neurologist at the Mayo Clinic—resigned because of the way an Alzheimer’s drug, Aduhelm, was approved. In a letter, Kesselheim claimed that the authorization of Aduhelm—a monthly intravenous infusion that Biogen has priced at $56,000 per year, which some worry could bankrupt Medicare—was wrong “because of so many different factors, starting from the fact that there’s no good evidence that the drug works,” that it was “probably the worst drug approval decision in recent U.S. history,” and that this “debacle … highlights problems” with the FDA advisory committee relationship.

It’s worth translating this episode into plain English: In the middle of the biggest vaccine rollout in U.S. history, which the government determined to be the only way out of the pandemic, but which also faced stiff headwinds of deep-seated popular hesitancy, the FDA approved a drug that would line a pharmaceutical company’s pockets with billions of taxpayer dollars, even though studies showed the drug did little but raise false hopes.

Kesselheim wasn’t being rash, as it was apparently the second time he had seen this kind of thing up close. In 2016, the director of the FDA’s Center for Drug Evaluation and Research, Dr. Janet Woodcock, approved a drug called eteplirsen over the objections of all the main FDA scientific reviewers. The grounds for the approval were not that patients got better—they didn’t. Rather, a kind of lab value, which can function as a “biomarker” (or indicator of disease), improved—another pharma trick. That was taken as good enough evidence to approve the drug. As Kesselheim and co-author Jerry Avorn later warned in The Journal of the American Medical Association, “speeding drugs to market based on such biomarker outcomes can actually lead to a worse outcome for patients.”

Soon after Kesselheim’s departure in June, the FDA’s two top vaccine officials announced they were also leaving. Reports explained that Dr. Marion Gruber, director of the FDA Office of Vaccines Research & Review and a 32-year agency veteran, and Dr. Philip Krause, a 10-year veteran, were leaving because of outside pressure by the Biden administration to approve boosters before the FDA had completed its own approval process. Meanwhile, Pfizer, doing more “science by press release” (a technique that often jacks up a company’s stock), was calling for boosters while “hailing great results with COVID-19 boosters and shots for school-age children.”

In a piece in the Lancet on Sept. 13, Gruber, Krause, and multiple international colleagues raised a red flag about pushing through a booster in the general population:

There could be risks if boosters are widely introduced too soon, or too frequently, especially with vaccines that can have immune-mediated side-effects (such as myocarditis, which is more common after the second dose of some mRNA vaccines, or Guillain-Barre syndrome, which has been associated with adenovirus-vectored COVID-19 vaccines [like the AstraZeneca, or Johnson & Johnson]). If unnecessary boosting causes significant adverse reactions, there could be implications for vaccine acceptance that go beyond COVID-19 vaccines. Thus, widespread boosting should be undertaken only if there is clear evidence that it is appropriate.

The Pfizer study was surprisingly tiny: Only 306 people were given the booster. As vaccine researcher David Wiseman (who did trials for rival Johnson & Johnson) pointed out at the FDA meeting, “there was no randomized control” in the Pfizer study. The subjects were younger (18-55) than the people who are most at risk of COVID death or serious illness, and were followed only for a month, so we didn’t actually know how long the booster would last, or if adverse events might show up after the 30 days. They were not followed clinically, so there was no information on infections, hospitalizations, or deaths. Rather, only their antibodies were measured—precisely the kind of shortcut that was taken with eteplirsen.

The study was too small, and the FDA panel held two votes on approval. In the first, it voted overwhelmingly (16 to 2) against approving Pfizer boosters for all ages; in the second vote, the panel supported boosters only for people over 65 or special at-risk groups.

And yet, in mid-August, Biden began publicly supporting boosters for all. Why? On Sept. 16, the Los Angeles Times reported that the president was following the advice of Fauci and the NIH, with the help of Dr. Janet Woodcock—the same FDA official who overrode FDA reviewers in the eteplirsen incident. Woodcock was by that point acting FDA commissioner, and was going around the FDA committee once again.

It was not only the Pfizer booster study that was weak. A New England Journal of Medicine study, based on Israeli Ministry of Health data, claimed that third-shot boosters give 11 times the protection of two. The entire study lasted only a month, and thus showed it was protective for that period, but not whether it would last as long or longer than the second shot’s protection.

During the spring of 2021, another wrinkle had emerged. Along with the widespread attacks on scientists who had criticisms of the simplified master narrative (including ones from major universities like Harvard, Yale, Stanford, Rockefeller, Oxford, and UCLA), many average Americans learned that certain major stories weren’t as widely known as they might have been, thanks in part to censorship by Big Tech.

In May, Facebook announced that it would no longer censor stories about the lab leak theory, which was how many people found out that it was in fact a viable scientific theory in the first place. (Facebook’s idea of transparency is telling you when it’s stopped censoring something; the same goes for YouTube.) But in July, the WHO itself admitted that it had been too hasty in ruling out a lab leak. (Nicholas Wade’s excellent May 2 article, by contrast, showed the technical virological reasons for why the virus might well have come from GoF research.) We also learned more about Big Tech’s motives when it was revealed that Google’s charity arm had funded the same GoF researcher that the NIH had funded—Peter Daszak of EcoHealth. At times, Big Tech’s censorship of “misinformation” coincides with its financial interests: Amazon, which has banned (and unbanned) books critical of the master narrative, has been looking into developing a major pharmacy division.

Meanwhile, three U.S. medical boards—the American Board of Family Medicine, the American Board of Internal Medicine, and the American Board of Pediatrics—went beyond censorship by threatening to revoke licenses from physicians who question the current but shifting line of COVID thinking and protocols. This forced doctors who had any doubts about the master narrative to choose between their patients and their livelihoods.

Things got so bad globally that Amnesty International eventually issued a report on this crisis: “Across the world, journalists, political activists, medical professionals, whistle-blowers and human rights defenders who expressed critical opinions of their governments’ response to the crisis have been censored, harassed, attacked and criminalized,” it noted. The typical tactic, the report’s authors say, is “Target one, intimidate a thousand,” whereby censors justify these actions as simply banning “misinformation” and “prevent[ing] panic.” The report goes on: “Evidence has shown that harsh measures to suppress the free flow of information, such as censorship or the criminalization of ‘fake news,’ can lead to increased mistrust in the authorities, promote space for conspiracy theories to grow, and the suppression of legitimate debate and concerns.” Censorship nourishes the weed it purports to exterminate.

It is, of course, vital that public health officials be able to lead in a crisis, convey consistent messages, and even ask citizens to change their behaviors. But public health can legitimately ask for such changes only if the policies it recommends are based on a scientific process that is solid enough to withstand scientific criticism and debate. Why else should anybody listen? Science is not itself dogma or an authoritarian discipline, but the opposite: a process of critical inquiry, and the method requires ongoing debate about how to interpret new data, and even what constitutes relevant data. Science, as the Nobel Prize-winning physicist Richard Feynman pointed out, requires questioning assertions:

Learn from science that you must doubt the experts … When someone says science teaches such and such, he is using the word incorrectly. Science doesn’t teach it; experience teaches it. If they say to you science has shown such and such, you might ask, “How does science show it—how did the scientists find out—how, what, where?” Not science has shown, but this experiment, this effect, has shown. And you have as much right as anyone else, upon hearing about the experiments (but we must listen to all the evidence), to judge whether a sensible conclusion has been arrived at.

Note how emphatic Feynman is that it’s not just the few who conduct the experiments, or even just “the experts,” who have a right to discuss and judge the matter. This is especially true in public health, because the field is so broad and composed of many disciplines, from those that deal narrowly with viruses to those that deal with mass behavioral changes.

When public health and allied medical and educational organizations censor scientists and health care professionals for debating scientific controversies—thus giving the public the false impression that there are no legitimate controversies—they misrepresent and grievously harm science, medicine, and the public by removing the only justification public health has for asking citizens to undergo various privations: that these requests are based on a full, unhampered, and open scientific process. Those who censor or block this process undermine their own claim to speak in the name of science, or public safety.

If we didn’t get to have a properly open scientific process, what did we get instead? Government enmeshment with legally indemnified corporations, public health officials misleading Congress, multiple honest regulators leaving the FDA because of inappropriate approvals, FDA heads taking Big Pharma jobs directly related to products they had just been involved in approving, a possible lab leak that couldn’t be discussed as such for more than a year so that it couldn’t be clearly disconfirmed, faceless social media platforms admitting that they control what we see and don’t see, and institutional censorship of many kinds.

If you were trying to create the perfect conditions for public skepticism about vaccines in the midst of a pandemic, could you have done any better than this?

Over the summer, the master narrative started to show cracks. By Aug. 18, Israel had the world’s third-highest number of new cases per capita. The Health Ministry retroactively released numbers showing that by mid-summer the Pfizer vaccine, which had been used in Israel extensively, was only 39% effective in preventing COVID infections, though much more effective in preventing severe disease. But additional data showed that, at a time when 62% of the entire Israeli population had been vaccinated, over 60% of Israel’s 400 hospitalized COVID cases were patients who had been fully vaccinated. This meant the vaccine was much more leaky than expected.
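The arithmetic behind that inference can be sketched with what epidemiologists call the screening method, which compares the vaccinated share of cases with the vaccinated share of the population. This is only a rough illustration built from the two percentages quoted above. The function name is hypothetical, and the calculation assumes the vaccinated and unvaccinated are otherwise comparable; it makes no adjustment for age or other differences between the groups.

```python
def screening_method_effectiveness(share_of_cases_vaccinated,
                                   share_of_population_vaccinated):
    """Crude vaccine effectiveness via the screening method.

    VE = 1 - (odds of being vaccinated among cases)
           / (odds of being vaccinated in the population)

    Assumption: no adjustment for age or any other confounder.
    """
    case_odds = share_of_cases_vaccinated / (1 - share_of_cases_vaccinated)
    population_odds = (share_of_population_vaccinated
                       / (1 - share_of_population_vaccinated))
    return 1 - case_odds / population_odds


# Figures quoted in the text: about 60% of hospitalized cases were fully
# vaccinated, at a time when 62% of the population was vaccinated.
crude_ve = screening_method_effectiveness(0.60, 0.62)
print(f"Crude effectiveness against hospitalization: {crude_ve:.0%}")
```

On these unadjusted figures the result is close to zero, which is why the numbers looked so alarming. An analysis that adjusted for age could give a very different answer, so the sketch shows the source of the concern rather than a reliable estimate.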

By Sept. 14, Israel’s Health Ministry Director General Nachman Ash reported that the country, even more heavily vaccinated than it had been in the summer, with 3 million (mostly elderly) of its 9 million citizens already having had a third shot, was now recording 10,000 new COVID cases a day. “That is a record that did not exist in the previous waves,” Ash said. It was also the highest number of COVID cases per capita of any country, beating out Mongolia and making Israel the “COVID capital of the world just months after leading the charge on vaccines.”

Many argued correctly that, yes, these breakthrough cases do occur, but they are usually mild, and the vaccines are very good at protecting people from severe illness and death. But then conflicting statistics began to emerge. Israeli hospitals were so overloaded they were turning away COVID patients. Four hundred died in the first two weeks of September. Hospital staff were worn out, and in a traumatic state, with one hospital director describing the situation as “catastrophic,” adding that “the public knows absolutely nothing about it.” Israeli Ministry of Health statistics from August showed that of those deaths that had been classified, more than twice as many who died were fully vaccinated (272) as opposed to those who were not vaccinated (133). By late September, the data was in for the fourth wave, and Dr. Sharon Alroy-Preis of the Ministry of Health revealed to the FDA Vaccine Advisory Committee that “what we saw prior to our booster campaign was that 60% of people in severe and critical condition were immunized, doubly immunized, fully vaccinated and as I said, 45% of the people who died in the fourth wave were doubly vaccinated.”

Israeli vaccine czar Salman Zarka doubled down, and said the country now had to contemplate a fourth dose in another five months: “This is our life from now on, in waves,” he said. Israeli Prime Minister Naftali Bennett echoed this on Sept. 13, blaming six patients who were hospitalized because they “were not fully vaccinated”—by which he meant they had only had two jabs. Divisive terms are easily turned on those who recently used them: Now, the stigma that attended “the unvaccinated” also applied to the vaccinated-but-not-vaccinated-enough.

Throughout the pandemic, Israel had extensive lockdowns. In contrast, Sweden became famous for never having locked down. Israel and Sweden have about the same size population (9 million and 10 million, respectively), and have almost identical rates of double-vaccinated people, if you take in all ages including children (63% Israel, 67% Sweden). If anything, Israel has the edge over Sweden because 43% of Israelis are also triple vaccinated. Yet the difference in the number of hospitalized patients is staggering. For the week of Sept. 12, 2021, Israel had 1,386 COVID hospitalizations, which was four times that of Sweden (340). Israel had a rolling seven-day average of 2.89 deaths per million, compared to the much lower number of deaths in Sweden (0.15).
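Because the two populations are close but not identical in size, the comparison can be checked per capita. The short sketch below uses only the figures quoted above; the rounded population sizes (9 million and 10 million) are the text's own approximations, and the variable names are illustrative.

```python
# Figures quoted in the text for the week of Sept. 12, 2021.
israel = {"population_millions": 9, "hospitalized": 1386, "deaths_per_million": 2.89}
sweden = {"population_millions": 10, "hospitalized": 340, "deaths_per_million": 0.15}


def hospitalized_per_million(country):
    """Hospitalized COVID patients per million residents."""
    return country["hospitalized"] / country["population_millions"]


# Raw ratio of hospitalizations, ignoring population size.
raw_ratio = israel["hospitalized"] / sweden["hospitalized"]

# The same ratio after normalizing by population.
per_capita_ratio = hospitalized_per_million(israel) / hospitalized_per_million(sweden)

# Ratio of the rolling seven-day death rates (already per million).
death_rate_ratio = israel["deaths_per_million"] / sweden["deaths_per_million"]

print(f"Hospitalizations, raw ratio:        {raw_ratio:.1f}x")
print(f"Hospitalizations, per-capita ratio: {per_capita_ratio:.1f}x")
print(f"Deaths per million, ratio:          {death_rate_ratio:.1f}x")
```

Normalizing by population widens the hospitalization gap slightly, since Israel is the smaller country, and the death-rate gap is larger still.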

What can account for this? Many argue that because Sweden (where public health works on a voluntary, participatory basis) never locked down, many more people there were exposed and got natural immunity. The Swedes had hoped to protect the most vulnerable in nursing homes, which they failed to do because of poorly trained staff—but in this they were no different from most Western nations that did lock down. Sweden also suffered more deaths per 100,000 than Israel overall. But through the summer of 2021 Sweden dropped to about 1.5 deaths a day from COVID. Its hospitals were never overwhelmed, suggesting that, once Sweden’s natural herd immunity was established, combined with its vaccines, it was now more protective than Israel’s largely vaccine-based immunity.

This wasn’t what the master narrative had promised. Israel was the world’s lab experiment because, being so early to complete a vaccine rollout on a large scale (about three months ahead of the United States), it was supposed to be a glimpse of everyone else’s future. Its people did seem to be among the first to break free of COVID. But they were now the first to show that the vaccine could wane. It’s not that the vaccinated in the United States weren’t doing better than the unvaccinated in terms of hospitalization for COVID; they definitely were. The fear, rather, was that this might only prove to be a short-term benefit.

In the summer, the CDC, behind on reporting its own U.S. data in real time, had been advised that the Pfizer vaccine was leading to breakthrough cases in the vaccinated in Israel. But it did not share this hole in the master narrative with outside public officials until one month later, as The Washington Post reported. “What is very concerning is that we’re not seeing the data … it needs to come out,” said former head of the CDC Tom Frieden. “What you can criticize the CDC for, validly, is why aren’t you talking about the studies you’re doing of breakthroughs?” Because there had been such a lag time, some people wondered if the CDC was hiding something. “And these are the people who are potentially friendly to CDC,” said Frieden, “so you know you’re in trouble when even your friends are suspicious of your motives.”

In the United States, a Mayo Clinic study found the Pfizer vaccine was 42% effective at stopping people from getting infected between January and July. In the U.K., nearly 50% of new COVID cases in the summer were among the vaccinated; each day there were about 15,000 new symptomatic cases in people who had been partially or fully vaccinated. As of July 15, new cases among the unvaccinated (17,581) were falling, and new cases among the fully or partially vaccinated (15,537) increasing, and set to overtake the unvaccinated.* According to the CDC, of the 469 attendees at Provincetown, Massachusetts celebrations in July that tested positive for COVID, 74% had been fully vaccinated. Scientists determined that those with such vaccine breakthrough infections can carry viral loads as big as infected unvaccinated people. Vaccinated people were not just infecting others; they were also clearly not completely immune themselves, though perhaps they were infectious for a briefer period.

The CDC also emphasized this study to support its new policy of asking the vaccinated to wear masks. On CNN, Wolf Blitzer asked Walensky if she got the messaging wrong, and hadn’t been nuanced enough. She answered that breakthrough infections tended to be mild. Blitzer then asked whether those who are vaccinated and had breakthrough infections could pass the virus on to older or more vulnerable people. Walensky answered: “Our vaccines are working exceptionally well. They continue to work well for delta, with regard to severe illness and death, they prevent it. But what they can’t do anymore is prevent transmission.” She said so to suggest to people who were vaccinated that if they were going home to people who were immunosuppressed, or frail, or with comorbidities, they should wear a mask. It was a nuanced response, and admitted a problem. A performance like that might have, because it was honest, enhanced vaccine confidence.

Unfortunately, the mainstream media was so overcommitted to a master narrative that promised 95% effectiveness for the vaccines (which, it also believed, implied stopping transmission) that it was caught off guard. Instead of asking whether scientists had compared the infectiousness of the vaccinated with that of the unvaccinated, the media took Walensky’s statement to mean that vaccinated people with breakthrough infections were just as likely to infect others as those who were not vaccinated and now had COVID. In this way, the episode transmitted more reasons for the vaccine hesitant to have doubts.

Internal documents showed that at this point the CDC was scrambling to change its messaging, moving from the master narrative simplification that “vaccines are effective against disease” to the idea that vaccines are essential because they protect against death and hospitalization. The agency even changed its official definition of what a vaccine does from producing immunity to a specific disease to producing protection from it.

The FDA had originally said that a vaccine less than 50% effective (defined as reducing the risk of having to see a doctor) would not be approved by regulators. Now something that appeared to the public to be significantly less effective was being not just approved but mandated: According to Israel’s Health Ministry, the Pfizer vaccine data showed that in those who were vaccinated as early as January (about five months prior), it was only 16% effective. A large study in Qatar also showed the vaccine waning at five months; in the United States, a Mayo Clinic study found the Pfizer vaccine had dropped to 42% effectiveness, while the CDC found it dropped to 66%, in just under four months of use. U.S. statistics showed that the vaccinated were still overall far less likely to get infected than the unvaccinated, or to get serious illness. But Israel had been vaccinated earlier than the United States. So what lay ahead for America?

It is noteworthy that this was the moment U.S. government officials and the media chose to assert, soon on a daily basis, that the country was now in “a pandemic of the unvaccinated,” even though it was now clear that the vaccinated could get infected and transmit the virus. Every day, famous Americans including entertainers, athletes, and politicians who had been doubly vaccinated were having “breakthrough” infections. The message that “this is only an epidemic of the unvaccinated … is falling flat,” noted Harvard epidemiologist Michael Mina.

By this point, the hesitant were no longer the only ones who had doubts. There were many anecdotal reports of great worry about breakthrough cases among the vaccinated (including among those who put much faith in vaccines because their immune systems were compromised by age or illness). Headlines about waning vaccines expressed despair that this pandemic might never end.

Instead of addressing how this disappointment might affect people, U.S. public health talking heads and Twitter-certified human nature experts turned now to behavioral psychology, a very American form of psychology, to deal with the crisis—treating their fellow citizens like children or lab rats to be given rewards when “good” and punishments when “bad.” Some seemed to relish telling people that if they didn’t just do what the experts told them to do, they’d lose their jobs, their place in school, or some other basic need, like mobility.

Other, more data-driven thinkers, including pro-vaccine physicians like Eric Topol—head of Scripps Research and a man who regards the production of the mRNA vaccine as “one of science and medical research’s greatest achievements”—now seemed quite concerned about the Israeli data. Topol assembled many articles showing how vaccinated populations still fare much better relative to unvaccinated populations in the United States. But he also pointed out that breakthrough infections can’t just be written off as the new delta variant escaping vaccine protection. Israeli data showed the potency of the vaccines was fading after five months, contrary to what Pfizer claimed. Thus, the data showed that the earlier one was vaccinated, the less protection one had against delta. That finding was crucial, because it meant that the new wave in Israel was not caused simply by the arrival of a new variant. The vaccines were losing potency over time.

Fauci and Surgeon General Vivek Murthy stuck to their guns, continuing to emphasize to the public that the vast majority of all COVID deaths—99.2% according to Fauci and 99.5% according to Murthy—were among the unvaccinated, a narrative that was picked up by news outlets, which started reporting obsessively about states with high unvaccinated rates and filling the news cycle with one story after another about stupid, retrograde Americans succumbing to COVID, their final wish not being for those they loved but for their medical practitioner to broadcast to the world their vaccine regret.

But, as David Wallace-Wells showed on Aug. 12 in New York Magazine, Fauci’s and Murthy’s numbers were not rooted in what was currently happening in America; they were instead based on the COVID death data from Jan. 1, 2021, to date. If you think this through, you’ll see what’s obviously wrong: For the first months of the year, few Americans were yet vaccinated, so of course most deaths would technically be among “the unvaccinated.” “Two-thirds of 2021 cases and 80% of deaths came before April 1, when only 15% of the country was fully vaccinated,” Wallace-Wells wrote, “which means calculating year-to-date ratios means possibly underestimating the prevalence of breakthrough cases by a factor of three and breakthrough deaths by a factor of five.” What we desperately needed was a comparison of vaccinated to unvaccinated people by each month. But, as Wallace-Wells noted grimly: “Unfortunately, more accurate month-to-month data is hard to assemble—because the CDC stopped tracking most breakthrough cases in early May.”
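The factors of three and five in that quotation follow from simple arithmetic, sketched below. The sketch rests on a simplifying assumption that is not stated in the source: that essentially no breakthroughs occurred in the early period, so the early cases and deaths only dilute the year-to-date ratio. The function name is hypothetical.

```python
def underestimation_factor(share_before_rollout):
    """Factor by which a year-to-date breakthrough share understates the
    share in the later period.

    Assumption (illustrative only): breakthroughs before the cutoff date are
    negligible, so early events add to the denominator but not the numerator.
    """
    if not 0 <= share_before_rollout < 1:
        raise ValueError("share_before_rollout must be in [0, 1)")
    return 1 / (1 - share_before_rollout)


# Shares quoted by Wallace-Wells: two-thirds of 2021 cases and 80% of
# deaths came before April 1.
cases_factor = underestimation_factor(2 / 3)
deaths_factor = underestimation_factor(0.80)

print(f"Breakthrough cases understated by a factor of about {cases_factor:.0f}")
print(f"Breakthrough deaths understated by a factor of about {deaths_factor:.0f}")
```

If only one-third of the year's cases fall in the period when breakthroughs were possible, a year-to-date ratio shows one-third of the current breakthrough share; for deaths, with one-fifth in the later period, it shows one-fifth.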

Wallace-Wells cited a New York Times analysis that claimed the vaccines were working to suppress severe outcomes from COVID infection by more than a factor of 100 for some states. But as Topol told Wallace-Wells, “The breakthrough problem is much more concerning than what our public officials have transmitted …. We have no good tracking. But every indicator I have suggests that there’s a lot more under the radar than is being told to the public so far, which is unfortunate.” The result, Topol said, was a widening gap between the messaging from public health authorities and the meaning of the data emerging in real time. “I think the problem we have is people—whether it’s the CDC or the people that are doing the briefings—their big concern is, they just want to get vaccinations up. And they don’t want to punch any holes in the story about vaccines. But we can handle the truth. And that’s what we should be getting.”

On Aug. 23, FDA approval of the Pfizer vaccine came through. It was based on the same patients as the earlier study, which previously included only two months of follow-up but now had six. With the approval, Pfizer officially stopped the randomized controlled trials and informed the participants in the control group that they had never received the vaccine. Now that they know they are not vaccinated, the controls may well choose (or be mandated) to get vaccinated, so we won’t be able to follow them as a control group anymore. That means the only randomized controlled trials we have of these vaccines are just six months long. Should some independent party, not a drug company, want to do a new RCT of the vaccine, it will find this almost impossible, because there will be very few people left who have neither been vaccinated nor already been exposed to COVID.

This is especially important because we don’t yet, and can’t yet, have any good randomized controlled trial data to rule out long-term effects. Vaccine supporters, including government officials, will say: “There’s not been a serious side effect in history that hasn’t occurred … within six weeks of getting the dose.” But, as Doshi and others argue, there are examples of long-term problems that came to light only after two months. Doshi points out, for example, that it took nine months to detect that 1,300 people who received GlaxoSmithKline’s Pandemrix influenza vaccine after the 2009 “swine flu” outbreak developed narcolepsy thought to be caused by the vaccine.

Myocarditis, an inflammation of the heart tissue, is a rare but real side effect in young males (about ages 16-29) that did not show up in the two-month-long trials that led to the Emergency Use Authorization, even though those studies included males as young as 16. It was not generally recognized by the scientific community or our safety reporting systems until four months into the vaccine rollout. We are still learning how it manifests in vaccinated males. In general, severe myocarditis can lead to scarring, and even cause death, so it must be taken seriously and followed long term. Right now, Paul Offit, professor of vaccinology at the University of Pennsylvania, says that most cases are mild and resolve on their own. The actual FDA approval for the Pfizer vaccine now acknowledges higher rates of myocarditis and pericarditis in males, and states the obvious: “Information is not yet available about potential long-term health outcomes. The Comirnaty [the new name for the Pfizer vaccine] Prescribing Information includes a warning about these risks.” An Israeli study found that, in boys aged 12-15, myocarditis occurred in only 162 out of a million, but this rate was 4-6 times higher than their chances of being hospitalized for a severe case of COVID.

But, to get a sense of the complexity of the decision facing parents, consider that in the United States the situation keeps changing, with more and more children now showing up in hospitals with COVID. The decision is further complicated by the crucial fact that COVID can cause myocarditis as well. And we are just now learning that different vaccines seem to cause myocarditis at different rates. As of October, several countries, including Norway, Sweden, and Denmark, have put the Moderna vaccine (which is especially potent) on pause for younger people, and Iceland has suspended it for all ages. But these countries are not ending childhood vaccination, just recommending different vaccines. We are lucky to have options. But we could use good studies comparing the COVID-induced myocarditis rates and vaccine-induced myocarditis rates by age and sex.

Which is why it’s so unfortunate that the RCTs were not much larger, and that they didn’t go on longer. Had they continued, and if their data ever became transparent, it could really help us in assessing long-term safety in a more reassuring way—that’s what RCTs are good at. One can more persuasively demonstrate that a vaccine doesn’t have these effects if there is a proper vaccine-free, COVID-free control group. But if vaccines continue to be pushed as the one and only answer, we will never know if certain health problems emerge, because there will be no “normal” vaccine-free group left for comparison. It’s a development that is quite disconcerting, for it suggests a wish not to know.

When the pandemic first broke, many were certain that the developing countries (with their malnutrition, crowded cities, lower numbers of health care workers, and inability to afford vaccines) would be universally devastated. But that prediction turned out not to be true.

The population of Ethiopia is about 119 million, just over one-third that of the United States. COVID vaccination rates are very low there: 2.7% of people have had at least one shot, and 0.9% have had two. As of Sept. 28, 2021, the country had recorded only 5,439 COVID deaths over the course of the entire pandemic. If the United States had such a death rate per capita, it would have lost just over 16,000 people, rather than over 700,000.
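The per-capita comparison is simple arithmetic, sketched below. The function name is illustrative, and the U.S. population is not given in the text. The sketch assumes it is exactly three times Ethiopia’s, rounding from the “just over one-third” description. A more precise population figure would give a somewhat lower number.

```python
def deaths_at_same_rate(deaths, population, other_population):
    """Scale a death count to another population at the same per-capita rate."""
    return deaths / population * other_population


ETHIOPIA_POPULATION = 119_000_000  # from the text
ETHIOPIA_DEATHS = 5_439            # from the text, as of Sept. 28, 2021
US_DEATHS = 700_000                # from the text ("over 700,000")

# Assumption: the text says only "just over one-third", so the U.S. population
# is rounded here to exactly three times Ethiopia's.
US_POPULATION = 3 * ETHIOPIA_POPULATION

us_equivalent = deaths_at_same_rate(
    ETHIOPIA_DEATHS, ETHIOPIA_POPULATION, US_POPULATION
)
ratio = US_DEATHS / us_equivalent

print(f"U.S. deaths at Ethiopia's recorded rate: {us_equivalent:,.0f}")
print(f"Recorded U.S. deaths are roughly {ratio:.0f} times that figure")
```

Under that rounding, the scaled figure comes out a little above 16,000, and the recorded U.S. toll is more than 40 times larger.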

Why does Ethiopia have such comparatively low numbers? It was not that the country was late to the pandemic. It recorded its first case in March 2020. It had three comparatively small “waves” in July and August 2020, April 2021, and most recently in August and September 2021. During these “waves,” deaths averaged about 37, 47, and 48 a day, respectively. The country had very brief lockdowns in select harder-hit towns at the beginning of the pandemic, and brief periods during which large gatherings, schools, stadiums, and nightclubs were closed. Then, during the second wave of April 2021, hospital capacity and oxygen supplies were stretched.

But by June 2021, Ethiopian physician friends with whom I was in weekly contact told me that they could see the second wave receding, as numbers were decreasing and hospital occupancy with COVID cases was going down. All this occurred with only about 1% of the country vaccinated (mostly the country’s health care workers, the elderly in key hot spots, and the vulnerable). Now, the third wave appears to be receding, especially in the capital, Addis Ababa. The Ethiopian physicians I know are extremely skilled, are more accustomed to serious infectious disease than many Western physicians, and have a different attitude toward herd immunity. When they saw that death counts were low compared to other countries, they didn’t advocate keeping the country closed, observing, as one put it, “it’s running through, taking its natural course, and lockdowns will only delay resolution.”

For part of the COVID period there has been armed conflict in one Ethiopian province, which could be affecting the numbers. Still, how are numbers anywhere close to this low even possible, and what might be learned? Interestingly, neighboring Kenya also reports a similarly low death rate. Clearly, what determines the death count in at least some countries is far more than vaccination rates. There is the average age of the population (in Ethiopia, the median age is 19.5 years; in the United States, 38.3), population density (Ethiopia is about 80% rural), travel within the country (Ethiopians rarely travel outside their own province, or far from their villages), ventilation (most Ethiopians live in thatched huts, and even in the cities, homes are draftier and more open), sun exposure (which keeps vitamin D levels up), exercise (Ethiopians are always walking, with only three cars per 1,000 people), and possible seasonal effects. They also had fewer lockdowns, and so may have more natural immunity. Crucially, obesity, overweight, and Type 2 diabetes are almost nonexistent in Ethiopia, but epidemic in the United States, the U.K., and Australia.

Staggeringly, none of these factors is even mentioned in the master narrative, yet their cumulative effect seems, in Ethiopia over the past 18 months, to have been very protective. A study of 160 countries in 2020 showed that the risk of death from COVID is 10 times higher in countries like the United States, where the majority of the population (67.9%) is overweight. CDC data shows that a whopping 78% of all people hospitalized with COVID in the United States, and therefore those most at risk of death, were overweight or suffered from obesity. By lowering immunity, obesity increases the chance of severe illness, and also decreases vaccine efficacy, as has been shown with the flu vaccine.

Another key element left out of America’s master narrative is the role of natural immunity. After 18 months of near total silence about it, Fauci was asked by CNN’s Sanjay Gupta about a study that showed natural immunity provides a lot of protection, better than the vaccines alone. Gupta asked Fauci if people who already had COVID needed to get vaccinated. “I don’t have a really firm answer for you on that,” Fauci said, “that’s something we are going to have to discuss ….” Instead, the U.S. administration and media still maintain, with a kind of ideological fervor, that everyone must get vaccinated, even the already immune. On the face of it, this is a strange assumption, because vaccines work by triggering our preexisting immune system, and by exposing it to part of the virus. If our bodies can’t produce good immunity by exposure to the virus, they won’t usually be able to produce it by exposure to a vaccine (which happens in immune-compromised people all the time). Vaccine immunity relies on the body’s ability to produce natural immunity.

Dr. Martin Makary, a surgeon and public health researcher at Johns Hopkins University, showed that about half of unvaccinated Americans have been exposed to the virus, and are therefore already immune: By December 2020 over 100 million Americans had been exposed to the virus, and 120 million by Jan. 31, according to a Columbia University study. Now, 10 months later, with the more infectious delta variant, the number is probably closer to 170 million, or half the country. Are the immune unvaccinated safe for others to be around? According to Makary, we have more than 15 studies showing that natural immunity is very strong, lasts a long time (so far the length of the entire pandemic), and is effective against the new variants. The reinfection rate for someone who had COVID was shown to be 0.65% (in a Danish study) or 1% (in a British study, and some others). A number of studies suggest natural immunity may last for years; even when antibodies go down, cells in the marrow are ready to produce them.

There is one CDC study often used to justify vaccinating the already immune, but it is an outlier. To its credit, the study begins by stating that “few real-world epidemiological studies exist to support the benefit of vaccination for previously infected persons.” It then purports to show that COVID vaccine immunity is 2.3 times as protective as natural immunity, based on a single two-month study from Kentucky. Makary says the study was “dishonest,” and asks why the CDC chose just two months of data to evaluate, when it had 19 months’ worth on hand, and “why one state when you have 50 states?” But perhaps the key weakness, as Harvard’s Martin Kulldorff points out, is that the study used a positive PCR test to determine whether someone was infected, rather than asking whether the person actually experienced a symptomatic infection, which is the key point. The problem with the PCR test is that it is good at detecting viral RNA, but can’t distinguish whether that RNA comes from intact particles, which are infectious, or merely from degraded fragments, which are not.

But when actual symptomatic infection has been looked at, natural immunity comes out better. A huge Israeli study of about 76,000 people, the largest on the subject, compared the rate of symptomatic infection in those who had been vaccinated (the “breakthrough” infection rate) with the rate of symptomatic reinfection in those who had previously had COVID. The data has been circulated (though not yet peer reviewed) and it is consistent with other studies showing better protection for the previously infected. It found that people who had a previous COVID infection and beat it with natural immunity in January or February 2021 were 27 times less likely to get a symptomatic infection than those who got their immunity from the vaccine. A Washington University study showed that even a mild infection gives long-lasting immunity. Makary of Johns Hopkins is not alone: Others on record as willing to question the need for vaccination of the already immune include Drs. Kulldorff (the Harvard epidemiologist), Vinay Prasad (a hematologist-oncologist and associate professor of epidemiology and biostatistics at the University of California San Francisco), Harvey Risch (a Yale epidemiologist), and Jayanta Bhattacharya (a Stanford epidemiologist).

Offit, who is on the FDA Vaccine Advisory Committee, is an interesting case, as he argues for mandates but concedes that it’s reasonable for the already immune not to want to be vaccinated. Asked by the pro-vaccine Zubin Damania, a Stanford-trained internist who goes by the pseudonym ZDoggMD on his viral interview show, what he would say to someone who asks: “Why should I be forced, compelled, mandated to get a vaccine when I have gotten natural COVID?” Offit answered, “I think that’s fair. I think if you’ve been naturally infected, it’s reasonable that you could say, ‘Look, I believe I am protected based on studies that show I have high frequencies of memory plasmablasts in my bone marrow. I’m good,’ I think that’s a reasonable argument.” The problem, as Offit noted in another interview, “is that bureaucratically it’s a nightmare.”